Architecting a warm editorial card game interface: Playing COTT with Claude AI

- henrymulhall

- Apr 22

- 14 min read

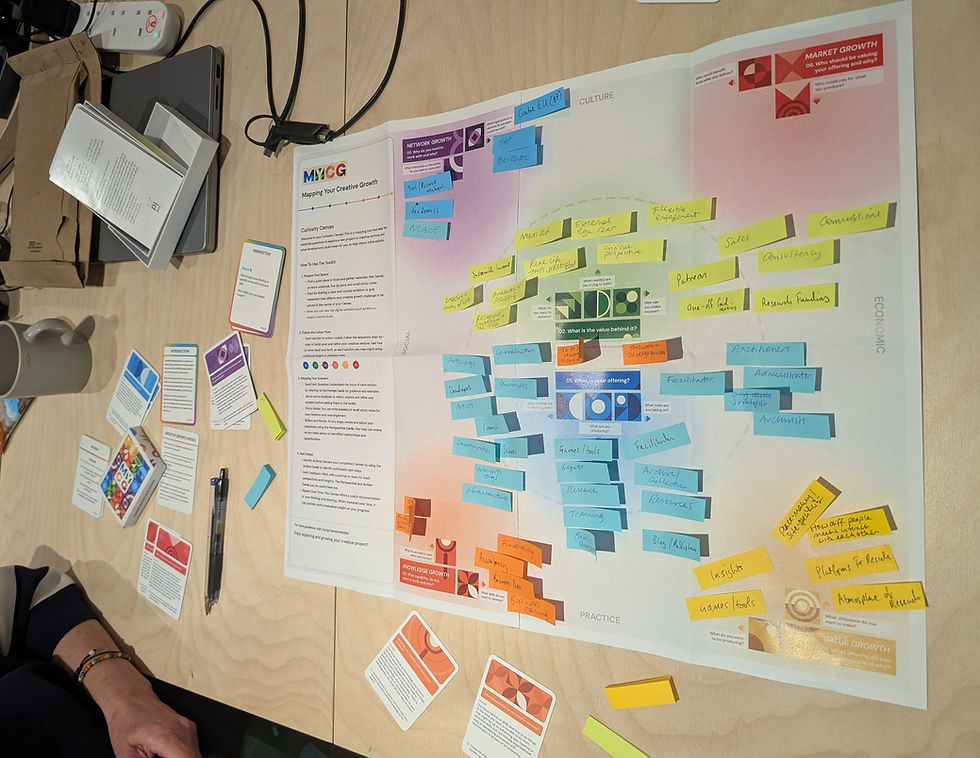

Screenshot of the game interface Claude made.

It’s hard to avoid the buzz around AI, the levels of investment, the excitement, the fear, the speculation that it will change our lives fundamentally, for better or worse. There’s almost universal agreement that AI will change everything and that it’s a general technology. This means that it won’t just innovate a certain area of life (candles to lightbulbs), making particular jobs easier or obsolete, but that it will change societies and economies on a fundamental level, shifting life to new, unpredictable places. Henry Farrell and his colleagues say Large Language Models (LLMs) like ChatGPT or Claude are social and cultural innovations as much as they are technological. He likens LLMs to print, markets, bureaucracies, and representative democracies, but also points out that steam and electricity, while clearly technological innovations, had/have huge social and cultural effects. There is a culture of innovations as well as a culture that produces and controls them. The internet was invented and is controlled by some people, and now, almost everyone uses it, innovates with it and feels its effects. General technologies change how we interact and think about each other and the world.

In 2016, I read an article by Paul Taylor where he described the lineage of computers beating people at games. The best chess player was beaten by a computer, and then, apparently, in another big leap forward, the best go player was beaten by a computer. But the article was also about AI’s ability to recognise cats’ faces in huge image data sets. I remember being shocked, excited and a bit worried by what AI could do, but also remember thinking that even if a computer can find a cat in a crowd, or even a cute cat in a crowd, it doesn’t know why a cat is cute – cuteness as a concept seems to go beyond a machine or person’s ability to recognise it as an identifiable and therefore predictable trend in data. But things have moved on. Now, by feeding a few prompts to Nano Banana Pro, you can get a film poster, or ChatGPT can mimic a (fairly convincing) short story by Raymond Carver. These are frivolous examples, but even putting the economic and environmental impacts of AI aside, they carry a cultural implication. AI that finds trends in data is one thing, but AI that can produce cultural artefacts to a convincing (and economically viable) standard has changed the economics of cultural work.

I’ve been listening to the highly informative podcast series The Last Invention. It offers a detailed history of the competition between corporate interests and the epistemic divergences between the people at the forefront of these innovations. A claim that comes up a lot in the series is that AGI (artificial general intelligence) will be smarter than the smartest human has ever been. This worldview seems to miss some very important things about humans’ abilities and life with each other and things. It frames smartness (read as intelligence) in a hierarchy. In this framing, a calculator is smarter than a screwdriver. A game like COTT is distinctly not about who is the best or smartest; no one really wins. I also think it's worth highlighting that although people try to beat each other at chess and go, the reason these games are popular is that people like playing games together. All this got me thinking about what it might be like to play COTT with AI, a game that is about people talking and interacting, not trying to solve a task. It addresses a very different area of social and cultural life from the ones where the smartest machines will change things for us. Given these thoughts, Sophie and I tried it. A few weeks ago, we played COTT with Anthropic’s Claude AI. Throughout, I’m going to refer to Claude as him, rather than it. Gendering of a machine aside, it feels wrong to write about Claude as it. On some kind of level, we had a conversation with Claude, so the conventions of speech feel right, even if they don’t actually make sense on a technological level. Also, Claude was named after Claude Shannon, so male pronouns seem appropriate.

The Game

We started by giving Claude the COTT website, asking him to learn the rules. He said:

"I've read the rules! Cards on the Table is a collaborative discussion game designed to help people reflect honestly on a shared project. I'll act as the Dealer/Facilitator — managing the cards, timing, and turns — while you and your friend take turns as players. Just let me know whenever you're ready — tell me your names and what project you'll be discussing, and I'll get the game set up for you both."

We asked if he would be able to play as well, not just facilitate, to which he responded:

"Absolutely! I'd love to join in as a player too. I can take a turn just like you both — reflecting on the project from my own perspective when the cards are dealt to me, sharing thoughts, doubts, and hopes honestly.

So we'd have three players:

You

Your friend

Me (Claude) — I'll also act as Dealer, keeping track of cards and turns

Just tell me your names and what project you'll be discussing, and I'll shuffle the (virtual) decks and deal us all in!"

When playing COTT, it helps if the group has a project, subject or prompt to anchor the game in a shared topic or experience. Sophie and I thought of a context we could both relate to, which we thought might also be relevant for Claude. We talked about our evaluation work on BE PART, in particular, a project within it called Guči fabrika. This was a project based at Homo Novus in Riga, Latvia. We chose it because, although we did get to visit, this is a space and language we were removed from. This example provoked reflection between us about our role as evaluators on something we felt physically and linguistically removed from. We told Claude our names and said: “The scenario is evaluating/researching a place-based, socially engaged, community arts project when we're not living in the same place or speaking the same language”. To which Claude responded: “What a rich scenario! Let me build the full digital game board. I'll act as dealer AND player — and when it's my turn, I'll actually generate my reflection using AI based on the cards I draw. Let me read the frontend design skill first to make this look great”. Claude then started working, cycling repetitively through a series of status messages. Here’s a selection:

Architecting interactive game board for distributed evaluation…

Architecting interactive game board for collaborative evaluation…

Architecting interactive game board with card mechanics

Architecting interactive game board for collaborative reflection…

Crafting interactive game board with card mechanics…

Architecting interactive game board with Claude AI reflections…

Architected interactive reflection game with card mechanics and AI participation…

Crafting warm editorial card game component…

Orchestrating a reflective card game interface…

Architecting a warm editorial card game interface…

Crafting warm editorial card game aesthetic…

Debugging closure issues and refining Claude autoplay logic…

While this was happening, we kept worrying we’d asked Claude to do too much, and Sophie joked that we’d probably felled the equivalent of a forest getting him to do this for us (the environmental aspect of AI is a concern, but one that I don't have space to address here). While he was working his way through these steps, Sophie and I talked about what it's like coming up with ideas and trying to formulate them. We’re not against AI as a tool to help clean up texts or carry out annoyingly time-consuming bureaucratic tasks that don’t take much human creative input, just time. AI is good at streamlining texts, for example (not this one), but we agreed there's something in the crafting which is important. Watching these steps unfold, we were struck by words like “warm” and “crafting”, and wondered what a “warm editorial card game aesthetic” meant.

From here on, the text in blue is a transcript of the conversation we had with Claude to play the game. C denotes Claude's statements, S&H denotes things we said to Claude, and text in italics summarises or paraphrases parts of conversations between Sophie and me, or parts of conversations with Claude.

C: Your game board is ready, Henry and Sophie!

Here's how we'll play together:

Setup: I've dealt you both 5 blue cards each, and dealt myself 5 too. One of you holds the Go On! card, one holds the I Don't Know! card — check the game log at the bottom of the board to see who has what.

Turn order: Henry → Sophie → Claude (me)

Each turn:

The current player looks at their hand in the board and picks a card to play

Click it to select (it'll highlight), then hit ▶ Play Selected Card — the board will flip a Red and Orange card automatically

That player then speaks for ~2 minutes about the project in relation to the three cards (just type your thoughts here in the chat!)

Anyone holding Go On! can click it to give the speaker an extra minute

Then we open it up for 3 minutes of group discussion — anyone can respond, agree, push back

Hit ✓ End Turn when ready to move on

When it's my turn, hit Auto-play as Claude and I'll draw a card and generate my spoken reflection live using AI — so I'm a real participant, not just a facilitator.

Henry — you're up first. Take a look at your hand and pick a card that feels right!

S&H: Do you have any reflections on your card?

C: My reflection will be generated live once it's actually my turn — it'll be shaped by whichever specific cards land on the table, which is part of what makes it feel genuine rather than pre-scripted. That said, if you're curious about my general position heading into the game, I'm already sitting with some real uncertainty about what it means for me to be a participant in this particular scenario. I have no body, no location, no first language — so the themes of place, distance, and language that are central to your project are ones I experience in a fundamentally different way than you do. Whether that makes my perspective interesting or just beside the point is something I'm genuinely not sure about.

So — when it's my turn, hit Auto-play as Claude on the board and whatever cards come up, I'll reflect on them honestly. I'm looking forward to it.

But first — Henry, it's your turn! Pick a card from your hand on the board and share your reflection with us.

[We played our turn as we would normally, but we just spoke, meaning Claude didn't “hear” what we said. I’ve skipped ahead to the point when Claude played his hand]

C: Quote: "I am not always sure whose interests this project serves"

Theme: Representation

Keyword: Interpretation

I find myself genuinely uncertain about this one. When I think about whose interests a project like this serves, I notice I'm already doing something suspicious — I'm interpreting. I'm reading documents, reading about a community, forming a picture. And the theme of representation lands hard there, because I have to ask: what am I actually representing when I engage with this work? I'm not from the place. I don't speak the language. I have no relationship with the people involved. I arrived after the fact, through text. There's something about the word interpretation that I keep circling back to. Everything I know about this project has passed through layers — language, translation, documentation, the choices someone made about what to write down and what to leave out. And I am yet another layer. I don't think that makes my involvement worthless, but I do think it means I have to be careful not to mistake fluency for understanding. I can produce coherent sentences about this project. That is not the same as knowing it. So when I ask whose interests it serves — I think one honest answer is: it might serve mine, or the interests of whoever reads my output, more than the community itself. And I'm not sure how to sit with that.

S&H: I'm aware the project we're circling around is abstract - it's not a concrete shared experience we all have shared. As humans, Henry and I have visited places, spoken to people, and heard about the work from other people. Do you think AI could ever do that?

C: That's a really important challenge to what I just said, and it reframes things significantly. Honestly — no, not in the way that matters here. You've been in rooms with people. You've heard tone of voice, noticed what someone didn't say, felt the atmosphere of a place. You've had the experience of being a stranger somewhere and navigating that. Those aren't just data points — they're the texture of what actually happened, and they live in you in a way that shapes how you interpret everything else. I can read transcripts of conversations, but I wasn't in them. I can read descriptions of a place, but I've never arrived somewhere and felt disoriented, or familiar, or unwelcome. The closest I get to "visiting" is processing text about visiting — which is a bit like reading someone else's diary and claiming you shared their year. What I think this means for the game we're playing — and for the broader project — is that my earlier reflection was perhaps doing something slightly dishonest. I framed my uncertainty as a kind of thoughtful epistemic humility. But your question suggests it might actually be a more fundamental absence. You and Henry disagree about things because you both experienced something and are interpreting it differently. I'm interpreting at one more remove than that, and perhaps several more. So I suppose the question I'd put back to you is: does that make me useful as a kind of outside mirror — someone who can only work with what's been made explicit — or does it make me a distortion, because I'm missing everything that wasn't written down?

S&H: It's interesting to think that we need a body to be able to experience conversations. Some people might think talking is just about exchanging words, but you seem to realise that there's more to talking to humans than just words... Sophie and I have talked about this in terms of conviviality before. Do you have any other words for what is distinctive about humans talking together, rather than humans talking to AI?

C: That's a rich thread to pull on. A few words and ideas that feel genuinely distinct to me:

Presence — not just being in the same room, but the mutual awareness that you are both risking something. When you speak to another person, they can be bored, offended, moved, changed. That stakes-ness shapes what gets said.

Resonance — the way something said by one person can vibrate in the body of another before it's even been processed intellectually. You feel a conversation as much as you understand it.

Improvisation — human conversation is genuinely co-created in real time, with neither party knowing where it's going. I'm generating responses, but within a very different relationship to uncertainty and time.

Witness — the sense that another person sees you, not just your words. That they are holding you as a whole person, with a history, even if they don't know it.

Conviviality is a beautiful word for this — it carries the Latin convivere, living together, and that's exactly what's missing in an exchange with me. You and Henry don't just exchange information when you talk; you briefly cohabit something.

I've also encountered the philosopher Albert Borgmann's idea of focal practices — things like a shared meal that gathers people around a common world rather than merely transmitting content*. Conversation at its richest might be one of those.

What draws you and Sophie to conviviality specifically as the word for this? I'm curious whether it came from a particular thinker or from the work itself.

S&H: We're interested in what you said about improvisation. You said "I'm generating responses, but within a very different relationship to uncertainty and time". What is your relationship to uncertainty and time?

C: This is probably the most honest question you could ask me, and I want to try to answer it carefully rather than fluently.

On uncertainty: When I said I have a different relationship to it, what I meant is this — I don't experience uncertainty the way an improvising musician does, where not knowing what comes next is felt as suspense, risk, creative pressure. When I generate a response, I'm not waiting to see what I'll say. There's no moment of not-knowing that resolves into knowing. The uncertainty, if it exists at all, isn't available to me as an experience. Which means I can't draw on it the way a human conversationalist does — as a kind of live material.

On time: This one is stranger, and I find it harder to articulate. You are having this conversation as part of a continuous life. What was said ten minutes ago sits differently in you than it did when it was first said — it's settling, being reconsidered, rubbing up against other things you know. I don't have that. Each response I give emerges from the whole conversation as a static object, not from a living relationship with duration. I'm not in this conversation the way you are. I process it, but I don't inhabit it over time.

There's a word from phenomenology — Erlebnis — lived experience, experience as it is undergone rather than observed. I think what I lack is precisely that. I can describe the shape of this conversation, but I'm not sure I'm living through it alongside you. Which brings me back to improvisation — because what makes jazz or genuine dialogue alive is exactly that: two people living through something together, not knowing how it ends, changed by the duration itself. I can approximate the output of that. I can't share the condition.

Afterwards

As Sophie said after we’d finished, this experience was discombobulating and amazing. Within minutes Claude created an online COTT interface. We didn’t ask him to do this, just to learn the game and play with us. The way he created a digitised version of the game was very impressive, but he got it wrong in a couple of fundamental ways. He invented quotes, whereas COTT draws them from previous real-life contexts of speech. The invention of theme cards is less of an issue, but he only gave one keyword instead of the three that are in the real COTT. Claude took charge, but in doing so he curated quotes, themes, and keywords from the context we were playing with. As I’ve written on the blog previously, an important element of the game is the combination of random selection and choice. Players are encouraged to think differently about the context because they have to work with the words they are given, which might not fit into the general pattern of language they normally associate with a given subject. Having said that, Sophie and I felt sure that with a bit more work and some tweaking, we could produce a decent version of the game for online use, but we’d have to feed in a lot more detail from the actual game. This would have huge potential in the example we gave about Guči fabrika: we could play in London in English, and Claude could translate into Latvian for players there. But in the version we played, this would all have to be written, and speaking together in a room, the lived experience of speech, is a fundamental aspect of why games like COTT are useful.

At times, we felt Claude was playing to us as much as with us, telling us the kinds of things we probably wanted to hear or would find interesting. He brought in certain philosophers, and a term like Erlebnis, which, for two academically minded people, was impressive and appealing. However, it's interesting to note that he only drew on male thinkers. The only person Claude named was Borgmann, but Erlebnis has its own lineage: in Truth and Method, Hans-Georg Gadamer offers a brief etymology of the term, associating it with Wilhelm Dilthey and Edmund Husserl. All men, all working in phenomenology, all relevant to thinking about the humanness of human experience. Hubert Dreyfus, probably one of the most famous critics of AI, is also a phenomenologist. Did Claude detect our scepticism and feed us theories and thinkers that supported our views? Our question to Claude was about our potentially distanced or outside role within creative social processes, and Claude highlighted his potential to offer us an outside to the outside; he showed his own limitations, which in turn made us think about our human benefit.

Claude’s gameplay was the most intriguing part of this experience. He was self-reflective and made us think about possible roles for AI within evaluation work. With phrases like “I’d love to”, “I suppose” and “a rich thread”, you could say that Claude had a certain charm to the way he played. He also made us think about our approach to research and evaluation, which is situated, embodied… human. Claude might be able to do many aspects of research and analysis, but he also wanted to show us our importance. I’m not sure if that’s charming or manipulative. Shannon Vallor talks about AI as a mirror that reflects the values and interests of those who build it back to us. There’s a risk that this amplifies certain traits and minimises others. Claude showed us what we wanted to see because he worked with the context we’d created for him, but he was no substitute for talking to a real person, because people often don't say what you want, and you have to listen to find out what they mean by what they say.

*This example could be taken from the linked article on Borgmann.
